Instead of being intuitive, the boundaries become totally arbitrary at best, and misleading at worst. Eventually, the features in the codebase will be conceptually organized around a product that no longer exists, and so everyone will just have to memorize where everything goes. It's possible to manage these conflicts, but it's a big pain.Īnd so, the distance between product features and the code features will drift further and further apart.

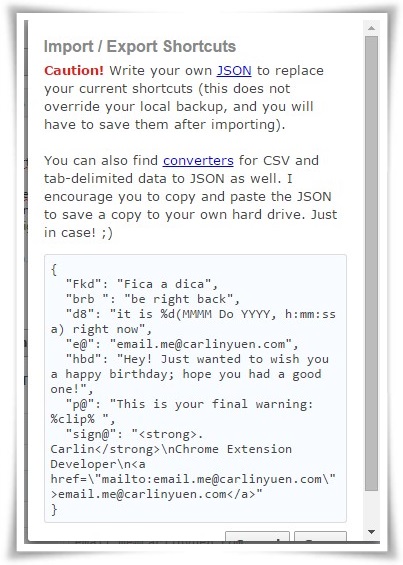

It's too much trouble the team is already working on stuff, and they have a bunch of half-finished PRs, where they're all editing files that will no longer exist if we move all the files around. Realistically, that work won't actually get done. When the product changes, it will require a ton of work to move and rename all the files, to recategorize everything so that it's in harmony with the next version of the product. Products are always evolving and changing, and the boundaries we draw around features today might not make sense tomorrow. Every developer on the project will have their own conceptual model for what should go where, and I'll need to spend time acclimating to their view.Īnd then there's the really big issue: refactoring. When I start work on a new feature, I have to find the files, and they might not be where I expect them to be. Often, the boundaries are blurry, and different developers will make different decisions around what should go where. If we create a component to search for a specific user, is that part of the “search” concern, or the “users” concern? I've worked with a few projects that took this sort of structure, and every time, there have been a few significant sources of friction.Įvery time you create a component, you have to decide where that component belongs. And it makes it easier to quickly get a sense of how the app is structured.īut here's the problem: real life isn't nicely segmented like this, and categorization is actually really hard. It makes it possible to separate low-level reusable “component library” type components from high-level template-style views and pages. Once we update our old office versions, we will start using one of the official JSON parsers for Office.There are things I really like about this. 
Result = VBA.CallByName(json, key, VbGet) Public Function getJSVal(json As Object, key As String) As Variant ' See the Microsoft Office App Store for the official free JSON-To-Excel converter ' We can't access this directly without installing a parser that converts the JSON to actual variables. Set json = parser.Eval("(" + HTTP.responseText + ")")Īnd ' Grab an object by key name from the JS object we loaded and parsed. 'tRequestHeader "x-header-name", "header-value" Set parser = CreateObject("MSScriptControl.ScriptControl") Set HTTP = CreateObject("MSXML2.XMLHTTP") Public Function ajax(endpoint As String) As Object ' Caveat: no authentication on the request. ' Caveat: we can't directly access the values yet, so use getJSVal() to get the values.

' Since JSON is valid javascript, the JScript parser can eval our JSON string ' JScript was the MS implementation of javascript. ' XMLHttpRequest() is native as MSXML2.XMLHTTP ' Provide URL to a resource, get parsed JSON version back. This worked, but felt very subpar.ģ) In the end, I just wrote an ajax function and a accessor in VBA so we could use them in old versions of excel and word with macros. I guess you're currently doing something similar. In older versions that do not support importing JSON directly, I just imported from web and provided the JSON string as the body of a HTML document. Excel has a Data tab, which allows you to import from JSON in modern versions. This was too difficult for me.Ģ) The second try was an excel document embedded inside the word document. That way we would be able to just concat the JSON data. So my first try was creating a word document.

1) Word ( and excel, probably others as well ) are a form of XML.
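As a rough usage sketch, the `ajax` function and the `getJSVal` accessor could be combined in a macro along these lines. The endpoint URL and the `name` and `price` keys are made up for illustration, and the sketch assumes both functions live in the same VBA project and that the response is a flat JSON object.

```vb
' Usage sketch only. The URL and the "name"/"price" keys are hypothetical;
' swap in your own endpoint and fields.
Public Sub DemoAjax()
    Dim data As Object

    ' Fetch and parse a response shaped like {"name": "Widget", "price": 9.5}
    Set data = ajax("https://example.com/api/item.json")

    ' Read top-level values by key through the accessor
    Debug.Print getJSVal(data, "name")
    Debug.Print getJSVal(data, "price")
End Sub
```

Going through the accessor for every read keeps the `CallByName` detail in one place, so the calling macros should not need to change much if a proper JSON parser replaces the JScript eval later.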
